Last Week

In the previous article of this Digital Twin series we looked at crowd modelling at the human level. This builds on one of the primary areas of modelling and simulation: Agent-based Modelling (ABM). In this article we look at the core themes of ABMs and some of the interesting challenges faced in building Digital Twins using them.

This Week

CEO/CTO Dr David McKee reflects with the Development Team on the Digital Twin Series so far and does a deep dive into one of the key practices that make Digital Twins possible: Agent-Based Modelling.

What is Agent-Based Modelling?

Agent-based Modelling (ABM) models the behaviour of things that move or groups of elements that move collectively. This could be crowds of people, transport, networking systems, or even biological systems.

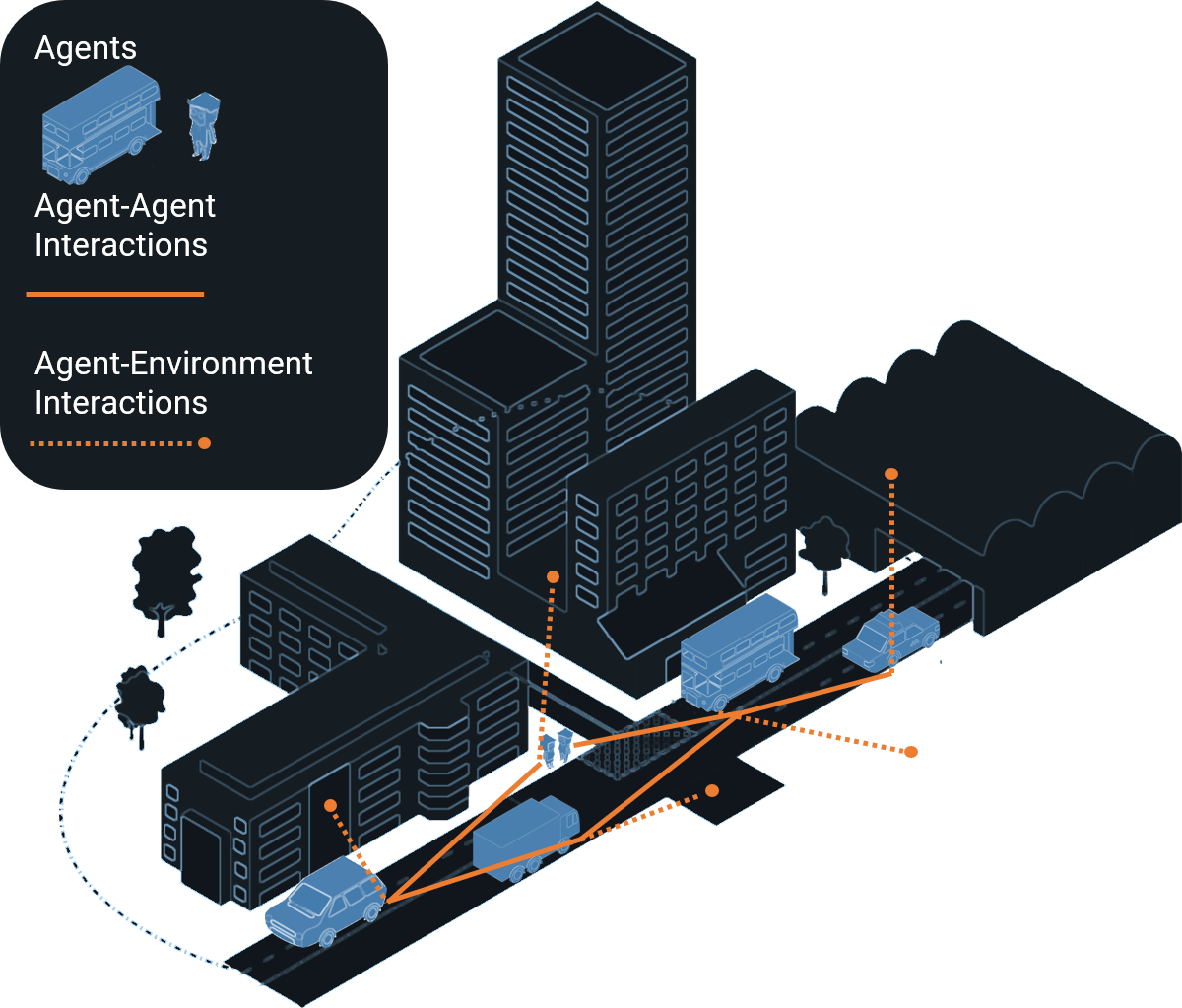

In ABMs there are 2 primary elements of interest:

- Agents that can move around and interact

- A world or environment for them to operate in

What is an Agent?

The “things that move” are referred to as “Agents”. If we consider a chess board to be an ABM then the agents would be the chess pieces, constrained by rules that describe how they can behave and interact, and the board would be the environment.

Another example is that of cars driving around, where the cars are the agents and the road network is the environment. Or it could be people (agents) moving around a building (the environment).
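The agent and environment split can be sketched in a few lines of code. The following is a minimal illustration only, assuming a hypothetical 10 by 10 grid as the "building" and people who take one random step per tick; the names `Agent`, `Environment`, `step` and `run` are invented for this example and do not come from any particular ABM platform.

```python
import random
from dataclasses import dataclass


@dataclass
class Agent:
    """A 'thing that moves': here, a person with a grid position."""
    name: str
    x: int
    y: int


class Environment:
    """The world the agents operate in: a bounded width x height grid."""

    def __init__(self, width: int, height: int):
        self.width = width
        self.height = height

    def contains(self, x: int, y: int) -> bool:
        return 0 <= x < self.width and 0 <= y < self.height


def step(agent: Agent, env: Environment, rng: random.Random) -> None:
    """Rule: try one random unit move; stay put if it would leave the grid."""
    dx, dy = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
    if env.contains(agent.x + dx, agent.y + dy):
        agent.x += dx
        agent.y += dy


def run(num_agents: int = 5, ticks: int = 100, seed: int = 0) -> list[Agent]:
    """Create the agents, then move every agent once per tick."""
    rng = random.Random(seed)
    env = Environment(width=10, height=10)  # assumed size, purely illustrative
    agents = [
        Agent(f"person-{i}", rng.randrange(env.width), rng.randrange(env.height))
        for i in range(num_agents)
    ]
    for _ in range(ticks):
        for agent in agents:
            step(agent, env, rng)
    return agents
```

The environment owns the constraints (the grid boundary) while each agent owns its state, which mirrors the chess board and chess piece split described above.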

As mentioned in the last article, the majority of ABM solutions show agent behaviour in terms of dots or circular discs moving around a map. Although the agents are increasingly being visualised with basic human form, they are not much more than a stick man in detail.

Agent-Based Modelling and Digital Twins

ABM is a core part of being able to create accurate and functional Digital Twins as it defines the elements of a system that move around and interact with other parts of the “twin”.

Without this key element of modelling we would be limited to just statistical summaries rather than a system that lives and breathes as if it were our real twin.

In a Digital Twin, the rules governing the Agents’ behaviour need to be informed by the observed behaviour of biology, people, cars, and other moving elements in the real world. With these agent-based rules in place we can begin forecasting behaviour in real time.

4 Core Themes of ABMs…

Behaviour Modelling: The behaviour of agents is typically modelled using sets of rules that can be combined to form complex and interesting behaviours. As simulation describes the behaviour of things over time, these behaviours need to be described with time in mind. This is typically achieved by describing behaviour as code, often in a high-level language such as Java, or by drawing flowchart-style diagrams that describe a sequence of activities. In the chess board example, the board’s rules would describe the rules of the game, and each agent would have a rule set of its own that defines how that chess piece can be played within the board’s rules.
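To illustrate how small rules combine into richer behaviour, here is a hedged sketch of the chess example. It is deliberately simplified: it only checks the geometry of a move and ignores blocking pieces, captures and check. The helper names (`all_of`, `any_of`, `PIECE_RULES`, `can_move`) are assumptions made up for this illustration.

```python
from typing import Callable

Square = tuple[int, int]  # (file, rank), each in 0..7
Rule = Callable[[Square, Square], bool]


def on_board(src: Square, dst: Square) -> bool:
    """Board-level rule: destination is on the 8x8 board and is a real move."""
    return all(0 <= c < 8 for c in dst) and src != dst


def rook_move(src: Square, dst: Square) -> bool:
    return src[0] == dst[0] or src[1] == dst[1]


def bishop_move(src: Square, dst: Square) -> bool:
    return abs(src[0] - dst[0]) == abs(src[1] - dst[1])


def knight_move(src: Square, dst: Square) -> bool:
    return sorted((abs(src[0] - dst[0]), abs(src[1] - dst[1]))) == [1, 2]


def all_of(*rules: Rule) -> Rule:
    """Combine rules: every rule must allow the move."""
    return lambda src, dst: all(rule(src, dst) for rule in rules)


def any_of(*rules: Rule) -> Rule:
    """Combine rules: at least one rule must allow the move."""
    return lambda src, dst: any(rule(src, dst) for rule in rules)


# Each piece's rule set is the board rule combined with its own movement rule.
PIECE_RULES: dict[str, Rule] = {
    "rook": all_of(on_board, rook_move),
    "bishop": all_of(on_board, bishop_move),
    "queen": all_of(on_board, any_of(rook_move, bishop_move)),
    "knight": all_of(on_board, knight_move),
}


def can_move(piece: str, src: Square, dst: Square) -> bool:
    return PIECE_RULES[piece](src, dst)
```

The queen needs no rule of its own here: its behaviour falls out of combining the rook and bishop rules, which is the kind of composition the paragraph above describes.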

Modelling Interactions: This is potentially the most interesting area of ABMs as it requires careful thought in order to ensure accurate simulation results. The synchronisation of elements within the simulation is vital and requires clear and concise descriptions of each interaction. Describing the types of interactions that different agents can have can be challenging. As Dr He Wang outlined in the previous article, it is impossible to fully map out the infinite set of all possible actions and interactions, so these need to be reduced to appropriate finite sets. However, the act of reducing this set can introduce conscious or unconscious bias into the model, such as defining interactions based on the context of our own age, gender and culture.
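One way to picture "reducing to a finite set" is as an explicit lookup table of permitted interactions. The sketch below is hypothetical: the interaction types and the agent kinds (`pedestrian`, `car`) are invented for illustration and are not taken from any specific model.

```python
from enum import Enum, auto


class Interaction(Enum):
    YIELD = auto()   # one agent gives way to the other
    FOLLOW = auto()  # one agent trails the other
    AVOID = auto()   # agents steer away from each other


# Hand-chosen table of which interactions each pair of agent kinds supports.
# frozenset keys make the lookup independent of argument order.
ALLOWED: dict[frozenset, set] = {
    frozenset({"pedestrian"}): {Interaction.AVOID, Interaction.FOLLOW},
    frozenset({"pedestrian", "car"}): {Interaction.YIELD, Interaction.AVOID},
    frozenset({"car"}): {Interaction.FOLLOW, Interaction.YIELD},
}


def allowed_interactions(kind_a: str, kind_b: str) -> set:
    """Return the finite set of interactions modelled for this pair of kinds."""
    return ALLOWED.get(frozenset({kind_a, kind_b}), set())
```

Everything that is missing from such a table simply cannot happen in the simulation, so the table is exactly where a modeller's assumptions, and any bias they carry, get baked in.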

Scaling: The more detailed a Digital Twin becomes, the more elements need to be added, and therefore the larger the model needs to be. Modelling every cell in a human body would require simulating around 37 trillion cells. Scaling the computation remains a challenge and was a major focus of the Slingshot team’s research at the University of Leeds as we look at modelling entire cities or increasingly complex systems in today’s world. For example, if we want to model the behaviour of a city’s population, this could mean several million agents representing people, a few million cars, as well as other actors in the system, and this needs to be handled in order for Digital Twins to reach their potential.

In order to facilitate large-scale simulation, various platforms have been developed. The most notable of these is the IEEE 1516 High-Level Architecture standard for distributed discrete event simulation. This standard, implemented by a few providers, is heavily used for building virtual training systems within the defence and aerospace sectors. We wrote a review of this and other technologies such as DDS and FMI in a previous paper.

Visualising: Visualising the behaviours in either 2D or 3D space will require further computation, particularly in rendering the movement of agents in a virtual world.

Three great papers that summarise the development of ABMs over the last few decades are:

- Farmer, J. Doyne, and Duncan Foley. “The economy needs agent-based modelling.” Nature 460.7256 (2009): 685-686.

- Railsback, Steven F., Steven L. Lytinen, and Stephen K. Jackson. “Agent-based simulation platforms: Review and development recommendations.” Simulation 82.9 (2006): 609-623.

- Bentley, K., H. Gerhardt, and P. A. Bates. “Agent-based simulation of notch-mediated tip cell selection in angiogenic sprout initialisation.” Journal of Theoretical Biology (2008).

What Time is it?

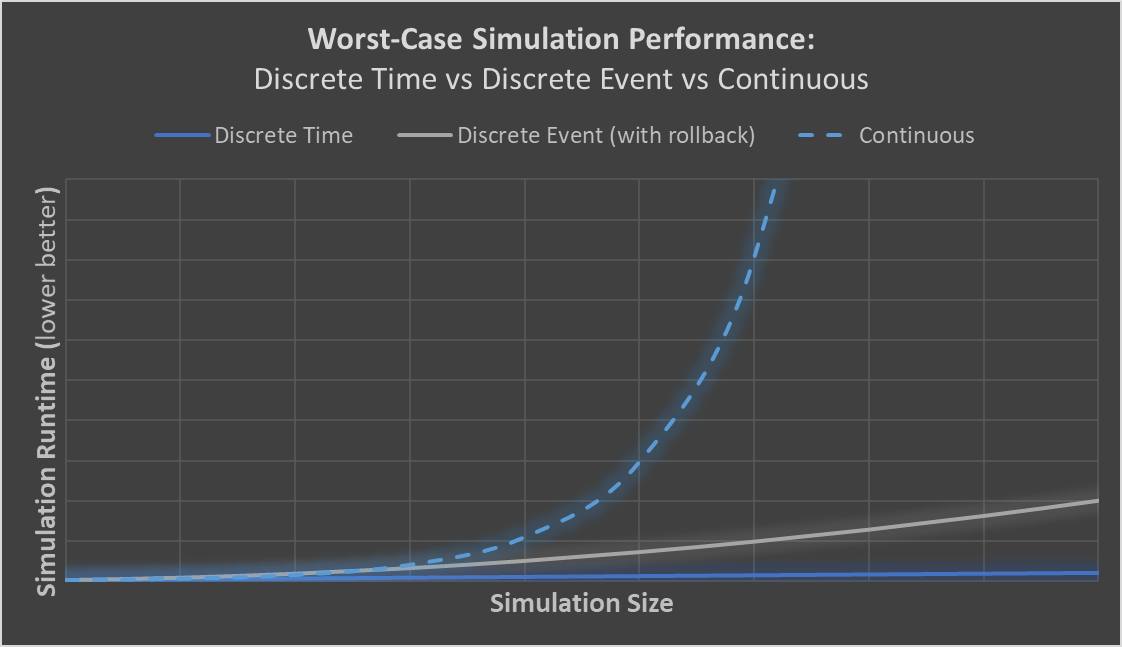

The concept of time underpins all the core themes of Agent-Based Modelling, and in fact all Digital Twins. At its core, simulation is about modelling how things behave over time, so it is crucial that we understand the different aspects of time, how they shape the data, and how they inform what the Digital Twins end up looking like. With that in mind, time can be divided into 3 categories:

- Discrete time where the simulation updates at every timestep or clock tick.

- Discrete event where events occur with a timestamp and then need to be ordered appropriately. One computationally expensive challenge is dealing with events that arrive out of order, which may mean doing some re-computation. In these cases, the Time Warp approach is commonly adopted: the simulation proceeds optimistically and, when an out-of-order event arrives, time within the simulation is rolled back appropriately, for example by reverse computation. Two key references are:

  - Jefferson, David R. “Virtual time.” ACM Transactions on Programming Languages and Systems (TOPLAS) 7.3 (1985): 404-425.

  - Fujimoto, Richard M. “Parallel discrete event simulation.” Communications of the ACM 33.10 (1990): 30-53.

- Continuous time where behaviour is described with respect to time using ordinary or partial differential equations (ODEs and PDEs). These systems rely on numerical algorithms known as “solvers” to approximate the solution of the set of equations, such as the Runge-Kutta methods, of which Dormand-Prince is a widely used variant. Solving these sets of differential equations can be complex and slow.
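As a rough illustration of what a continuous-time solver does, the sketch below implements the classic fixed-step fourth-order Runge-Kutta method for a single scalar ODE. It is not Dormand-Prince, which additionally adapts its step size, and the test equation dy/dt = -y is an assumption chosen only because its exact solution is known.

```python
import math
from typing import Callable

Derivative = Callable[[float, float], float]  # f(t, y) -> dy/dt


def rk4_step(f: Derivative, t: float, y: float, h: float) -> float:
    """Advance y by one step of size h using classic fourth-order Runge-Kutta."""
    k1 = f(t, y)
    k2 = f(t + h / 2, y + h / 2 * k1)
    k3 = f(t + h / 2, y + h / 2 * k2)
    k4 = f(t + h, y + h * k3)
    return y + h / 6 * (k1 + 2 * k2 + 2 * k3 + k4)


def integrate(f: Derivative, y0: float, t0: float, t1: float, steps: int) -> float:
    """Integrate dy/dt = f(t, y) from t0 to t1 in equal steps; return y(t1)."""
    h = (t1 - t0) / steps
    t, y = t0, y0
    for _ in range(steps):
        y = rk4_step(f, t, y, h)
        t += h
    return y


def decay(t: float, y: float) -> float:
    """Illustrative ODE dy/dt = -y, whose exact solution is y0 * exp(-t)."""
    return -y


approx = integrate(decay, y0=1.0, t0=0.0, t1=1.0, steps=100)
error = abs(approx - math.exp(-1.0))  # gap between numerical and exact answer
```

Each step evaluates the derivative four times, and a real model would do this for every coupled variable, which gives a feel for why continuous simulation is costly compared with a simple clock tick.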

The following chart shows a rough comparison of the worst-case performance of the different approaches to modelling time. The comparison assumes a typical continuous solver that operates in exponential time with respect to the number of elements being modelled, whilst discrete event systems with rollback will be quadratic time at worst, and straightforward discrete time modelling will be linear.

Note that there have been many techniques developed for optimising the performance of continuous simulations including simplification through reduced order modelling.

As previously mentioned, most agent-based modelling, though definitely not all, falls into the discrete time and discrete event categories.
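The difference between those two discrete categories can be sketched as follows. This is a minimal illustration under simple assumptions: agents move along a line at a constant speed, and events are plain `(timestamp, name)` pairs that are all known before the run starts. The function names are made up for this example.

```python
import heapq


def run_discrete_time(positions: list[float], speed: float,
                      dt: float, ticks: int) -> list[float]:
    """Discrete time: every agent is updated at every clock tick."""
    positions = list(positions)
    for _ in range(ticks):
        positions = [p + speed * dt for p in positions]
    return positions


def run_discrete_event(events: list[tuple[float, str]]) -> list[tuple[float, str]]:
    """Discrete event: process timestamped events strictly in time order."""
    queue = list(events)
    heapq.heapify(queue)  # priority queue keyed on timestamp
    processed = []
    while queue:
        timestamp, name = heapq.heappop(queue)  # always the earliest event
        processed.append((timestamp, name))
    return processed


final_positions = run_discrete_time([0.0, 1.0], speed=2.0, dt=0.5, ticks=4)
ordered = run_discrete_event([(3.0, "arrive"), (1.0, "depart"), (2.0, "turn")])
```

The priority queue handles events that were submitted out of order before processing began. The harder case described earlier, where an event arrives with a timestamp earlier than work already done, is what requires rollback.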

What’s Next for Agent-Based Modelling?

This article only scratches the surface of ABMs, but it gives an insight into what is possible with them and what is required to achieve it. However, the partnership between Agent-Based Modelling and Digital Twins still has some mountains to climb, with several challenges to be resolved:

- Real-time Processing

- Choosing the right set of Behaviours and Interactions

- Reducing and Understanding Bias

- Visualising Digital Twins at a scale that makes it accessible for all stakeholders, not just engineers

These challenges all share one starting point: collaboration. We hope that this Digital Twin Series sheds light on the fact that experts from every sector need to have their say on what a Digital Twin is, what is required to feed it, and what methods should be used to build it. Most importantly, we need to share our knowledge and data to inform better and more accurate Agents and Digital Twins.