In the Digital Twin Series Finale, CEO/CTO Dr David McKee rounds up the series with a reflection on each of the contributions and lays out what a Digital Twin means to us at Slingshot.

We began this Digital Twin series of interviews and articles by talking to many of the key thought and technical leaders in the Leeds community, and we've had some great contributions from:

- Prof Richard Romano and Dr He Wang at the University of Leeds

- Zandra Moore, CEO of Panintelligence

- Stuart Howie from Avison Young

- Stephen Blackburn from Leeds City Council

- Stephen Perkins, who recently left Herman Miller

- Will Schaffer from Mercia Technologies

- Slingshot Development Team

Whilst the series has come to an end for now, we have come away with much more knowledge of Digital Twins, of how to take the technology forward, and of the challenges we need to overcome.

Discovering the Digital Twin

We started by looking at some of the many definitions of a Digital Twin, the most prominent being those from the Centre for Digital Built Britain and from Gartner:

“A digital representation of a physical asset or the service delivered by it, used to make decisions that will affect the physical asset. Any changes to the physical assets will be reflected in the digital twin.”

“A digital twin is a digital representation of a real-world entity or system. The implementation of a digital twin is an encapsulated software object or model that mirrors a unique physical object, process, organisation, person or other abstraction. Data from multiple digital twins can be aggregated for a composite view across a number of real-world entities, such as a power plant or a city, and their related processes.”

Both definitions give a huge amount of detail but are quite hard to digest.

I personally like the definition of Mirror Worlds as “Software models of some chunk of reality, some piece of the real world going on outside your window”, although it is not very helpful.

In this series I asked each of our experts what they meant by a Digital Twin. A Digital Twin is…

Prof Richard Romano (Humancentric Digital Twins)

…a simulation, or virtual prototype, that can act as a baseline situation for virtual prototyping, testing and experimentation.

Dr He Wang (Personal Digital Twins)

In a Digital Twin of a city, the digital twins of the residents live a couple of minutes ahead of them in time.

Stephen Blackburn (Digital Twins & Decision Making: Leeds City Council)

It is a digital version of the real world, where you could easily make changes and see the impact of those changes before you invest any real time, effort, or money in the real world.

Stuart Howie (Digital Twins & Decision Making: Urban Regeneration)

A virtual testing approach to provide better outcomes for communities, by making smarter investments into places.

Will Schaffer (Investing in Digital Twins)

It’s a living model that you can play with.

Stephen Perkins (End of Open Plan Offices? Smarter Solutions for a Safer Office)

A simulation that lets you carry out iterative analysis so that designs can be revised quickly.

Zandra Moore (Data Insights, Digital Twins and Dashboarding)

A replication of something that you can drive a model or data across in order to manage the risk of applying, or investing in, a physical change.

Our Definition of a Digital Twin

On that basis, at Slingshot we have revised our own definition to the following:

A digital environment that reflects on, mirrors, and evolves ahead of the physical environment or service to facilitate SMART decision making and storytelling of possible futures.

This supports our vision that Digital Twins should be accessible, understandable, and usable by anyone, rather than restricted to those with data science or software engineering backgrounds. For this to be practical, building a Digital Twin also needs to be quick: if an analysis is needed within 2 weeks, spending 2 months building the model is not acceptable. This has informed another aspect of our vision:

Rapid Prototyping of SMART Systems using Machine Learning and NO Coding.

How do we move forward?

We have a vision of what we want to achieve; however, there are numerous challenges in getting there:

- Social challenges in terms of willingness to share data and the trade-off against the risk of liability

- Technical challenges that come with the scale of some of these models whilst trying to make them secure

- Time is always a challenge, both to build these things and then to run them

- Cost, in every sense

But today none of these is insurmountable. At Slingshot we combine the elastic scalability of Cloud computing with serious advances in machine learning to optimise massive Digital Twin deployments of entire cities. These can run in real time and simulate every individual's journey from home to work and back again, whilst accounting for details such as social distancing between people on the pavements or the health risks that air pollution poses to a particular individual.
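The article does not describe how Slingshot's simulation engine works internally. As a hypothetical illustration only, the sketch below shows one check a city-scale pedestrian simulation might run at each time step: finding pairs of people who are closer than a social-distancing threshold. It assumes positions have already been projected into metres on a local plane and uses a 2 m threshold; the function name `close_pairs` is invented for this example. Bucketing people into a uniform grid whose cell size equals the threshold means each person is compared only with people in the same or an adjacent cell, rather than with everyone in the city.

```python
import math
from collections import defaultdict


def close_pairs(positions, threshold_m=2.0):
    """Return sorted index pairs (i, j), i < j, of people closer than threshold_m.

    positions: list of (x, y) tuples in metres on a local planar grid
    (an assumed projection; real lat/lon would need converting first).
    """
    # Bucket each person into a grid cell of side threshold_m. Two people
    # closer than the threshold can differ by at most one cell on each axis.
    cells = defaultdict(list)
    for i, (x, y) in enumerate(positions):
        cells[(math.floor(x / threshold_m), math.floor(y / threshold_m))].append(i)

    pairs = set()
    for (cx, cy), members in cells.items():
        # Compare against the same cell and its 8 neighbours only.
        for dx in (-1, 0, 1):
            for dy in (-1, 0, 1):
                # .get() does not insert into the defaultdict, so iteration is safe.
                for j in cells.get((cx + dx, cy + dy), ()):
                    for i in members:
                        if i < j and math.dist(positions[i], positions[j]) < threshold_m:
                            pairs.add((i, j))
    return sorted(pairs)
```

For example, `close_pairs([(0, 0), (1.5, 0), (10, 10)])` returns `[(0, 1)]`: only the first two people are within 2 m of each other.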

However, this depends on one major requirement for data: a level of standardisation. By this we do not mean a single, fully unified standard for all Digital Twins, or even a single standard that fully covers one sector. Rather, as we are doing with both the Digital Twin Consortium and the National Digital Twin Programme, we mean identifying and understanding small sets of standards that can be made compatible and together provide nearly complete coverage of a sector.

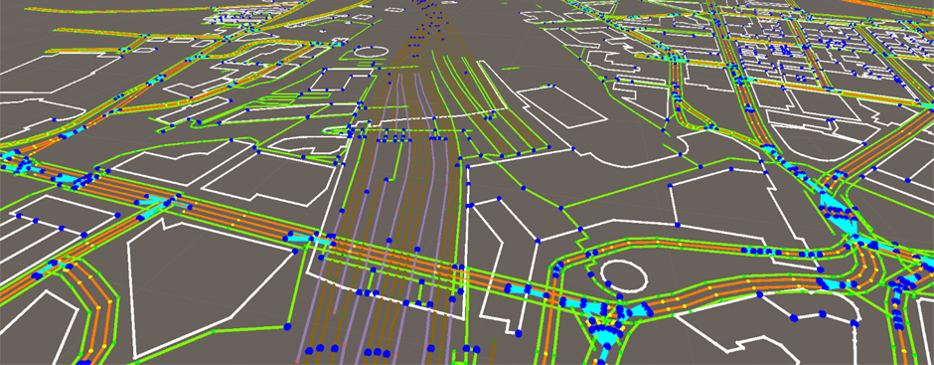

For us at Slingshot this means using a graph-based data model that allows multiple datasets in different formats, such as CSV, OpenAPI, IEEE 1516 (HLA), or some of the most common 3D formats (such as OBJ and FBX, which are BIM compliant), to be tied together using a geospatial reference point. In the figure, every point, whether it is a blue dot, a green line, or the white outline of a building, has one or more datasets associated with it that can be queried and turned into something functional and truly useful. In this example, produced in collaboration with Leeds City Council, the Open Data Institute, and the Lloyd's Register Foundation, we have data from OpenStreetMap, the NASA Shuttle Radar Topography Mission, ANPR CSV inputs, NOMIS employment data, and postcode information (courtesy of Postcodes.io) for every building in the area, as well as various private datasets.
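The post does not publish Slingshot's data model, so the following is a minimal, hypothetical sketch of the idea described above: a graph whose nodes are anchored at geospatial reference points (a building, a road segment, a sensor), with records from any source attached to whichever node lies nearest their coordinates. The names `GeoNode`, `TwinGraph`, `attach` and `query`, and the nearest-node matching rule, are assumptions made for illustration. Parsing of CSV, OpenAPI, HLA or 3D files is left out; each record is assumed to be an already-parsed dictionary that comes with a latitude and longitude.

```python
import math
from dataclasses import dataclass, field


@dataclass
class GeoNode:
    """A node in a hypothetical twin graph, anchored at a geospatial reference point."""
    node_id: str
    kind: str   # e.g. "point", "road", "building"
    lat: float
    lon: float
    datasets: dict = field(default_factory=dict)  # dataset name -> list of records


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two WGS84 coordinates."""
    r = 6_371_000.0  # mean Earth radius in metres
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dp = p2 - p1
    dl = math.radians(lon2 - lon1)
    a = math.sin(dp / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dl / 2) ** 2
    return 2 * r * math.asin(math.sqrt(a))


class TwinGraph:
    def __init__(self):
        self.nodes = {}  # node_id -> GeoNode
        self.edges = {}  # node_id -> set of neighbouring node_ids

    def add_node(self, node):
        self.nodes[node.node_id] = node
        self.edges.setdefault(node.node_id, set())

    def connect(self, a, b):
        # Undirected edge, e.g. a building fronting onto a road segment.
        self.edges[a].add(b)
        self.edges[b].add(a)

    def nearest(self, lat, lon):
        # Linear scan for clarity; raises ValueError if the graph is empty.
        return min(self.nodes.values(), key=lambda n: haversine_m(lat, lon, n.lat, n.lon))

    def attach(self, dataset, record, lat, lon):
        """Tie one record to the node nearest its coordinates; return that node's id."""
        node = self.nearest(lat, lon)
        node.datasets.setdefault(dataset, []).append(record)
        return node.node_id

    def query(self, node_id, include_neighbours=True):
        """Collect every dataset attached to a node and, optionally, its graph neighbours."""
        ids = {node_id} | (self.edges[node_id] if include_neighbours else set())
        result = {}
        for nid in sorted(ids):
            for name, records in self.nodes[nid].datasets.items():
                result.setdefault(name, []).extend(records)
        return result
```

With a building node connected to a road node, querying the building with `include_neighbours=True` also returns, for instance, ANPR counts attached to the road, which is the kind of cross-dataset lookup a geospatially linked graph makes cheap. A production system would replace the linear nearest-node scan with a spatial index.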

Summary

To conclude, this series with the Leeds community has brought out some common themes for the use of Digital Twins across a range of sectors:

- Open Data as a common base on which to build

- Significant overlaps between sectors

- The need for Digital Twins to be able to tell a story

In the last article, Digital Twins for Beginners, we set out the 5 steps for creating a Digital Twin. I will amend the third step of that diagram to the following:

To achieve this critical element, one major question remains: other than altruism, what can motivate the private sector (including companies such as ourselves) to release more data openly for the public good? Who pays for this level of openness, and what are the business models? For further reading, I suggest the ODI's recent report on Data Institutions. I personally believe there will come a point where open data is the norm and we will need to explain why we are not open, but I think this is several years away. Over the next few months at Slingshot we will be announcing some key benefits for clients who agree to appropriate levels of openness about their data, so stay tuned.